Gradient Boosted Trees is a technique whose base learner is CART (Classification and Regression Trees). XGBoost is a more regularized form of gradient boosting.

Boosting Algorithm AdaBoost and XGBoost

XGBoost is one of the most popular variants of gradient boosting.

XGBoost computes second-order gradients: GBM uses the first-order derivative of the loss function at the current boosting iteration, while XGBoost uses both the first- and second-order derivatives.
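As a rough illustration of the first-order versus second-order distinction, the sketch below computes both derivatives of the log loss and compares two ways of choosing a leaf value. The function names are hypothetical, and the first-order rule shown (the mean negative gradient, which is what a least-squares fit to the negative gradient yields in a leaf) is only one simple variant.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def logloss_grad_hess(y, raw_score):
    """First and second derivative of log loss with respect to the raw score."""
    p = sigmoid(raw_score)
    return p - y, p * (1.0 - p)


def first_order_leaf_value(grads):
    # Least-squares fit to the negative gradient: the leaf predicts its mean.
    return -sum(grads) / len(grads)


def newton_leaf_value(grads, hessians):
    # Second-order (Newton) step: gradient sum scaled by the curvature sum.
    return -sum(grads) / sum(hessians)


labels = [1, 1, 0]
raw_scores = [0.0, 0.0, 0.0]  # every sample starts at probability 0.5
pairs = [logloss_grad_hess(y, s) for y, s in zip(labels, raw_scores)]
grads = [g for g, _ in pairs]
hessians = [h for _, h in pairs]

print(first_order_leaf_value(grads))       # about 0.1667
print(newton_leaf_value(grads, hessians))  # about 0.6667
```

The Newton step is larger here because the curvature of the log loss at probability 0.5 is small (0.25 per sample), so dividing by it scales the update up.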

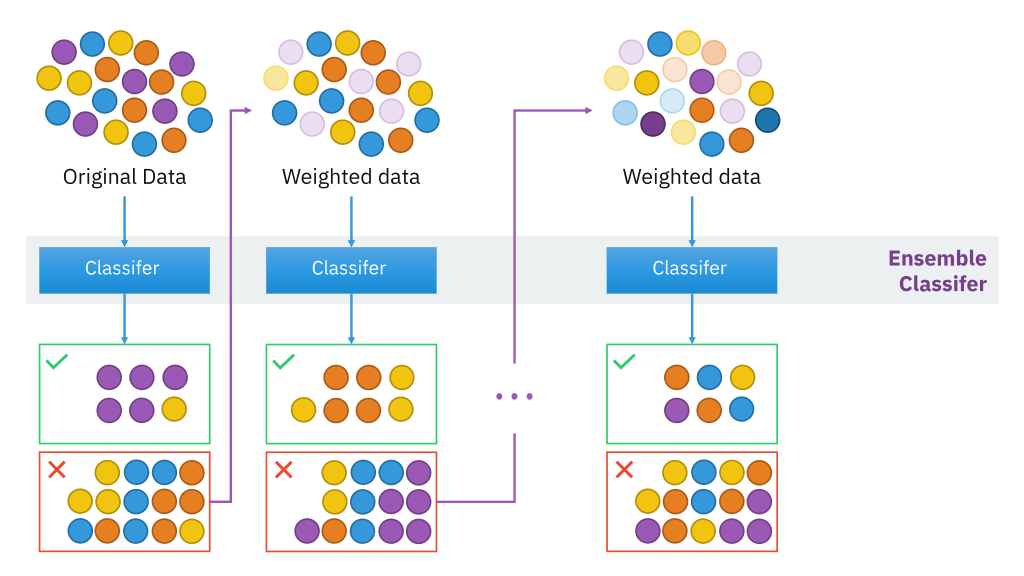

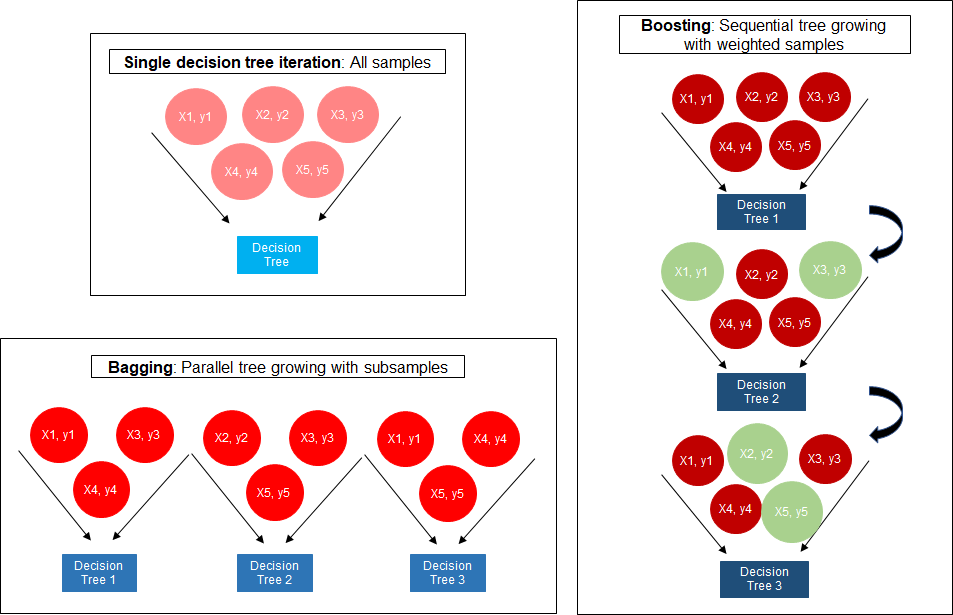

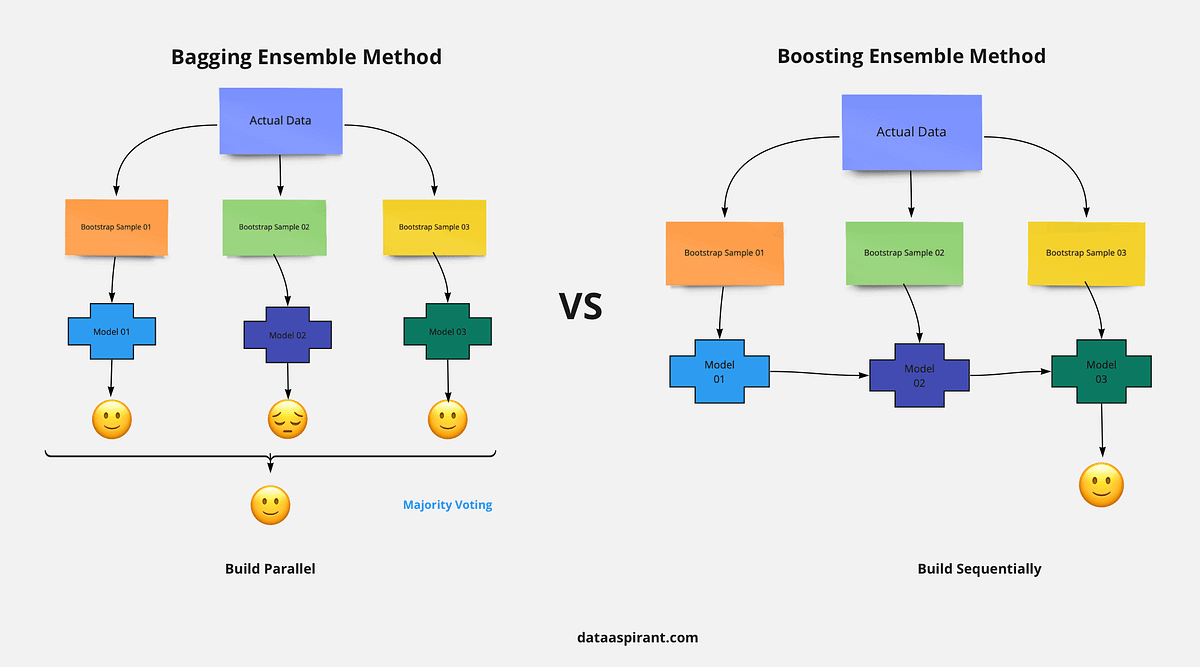

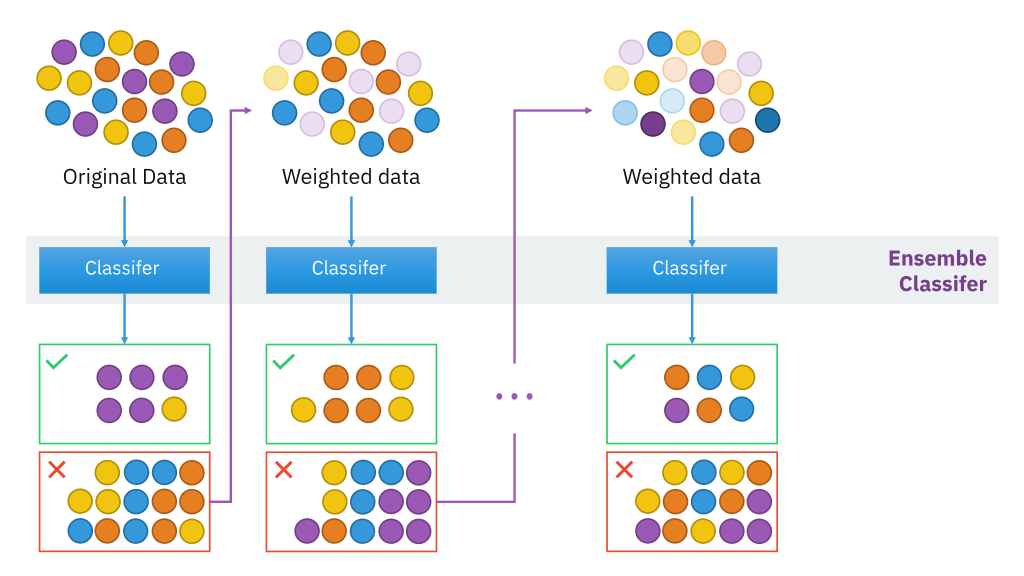

However, there are very significant differences under the hood in a practical sense. Boosting is a method of converting a set of weak learners into a strong learner. In this post you will discover how you can estimate the importance of features for a predictive modeling problem using the XGBoost library in Python.

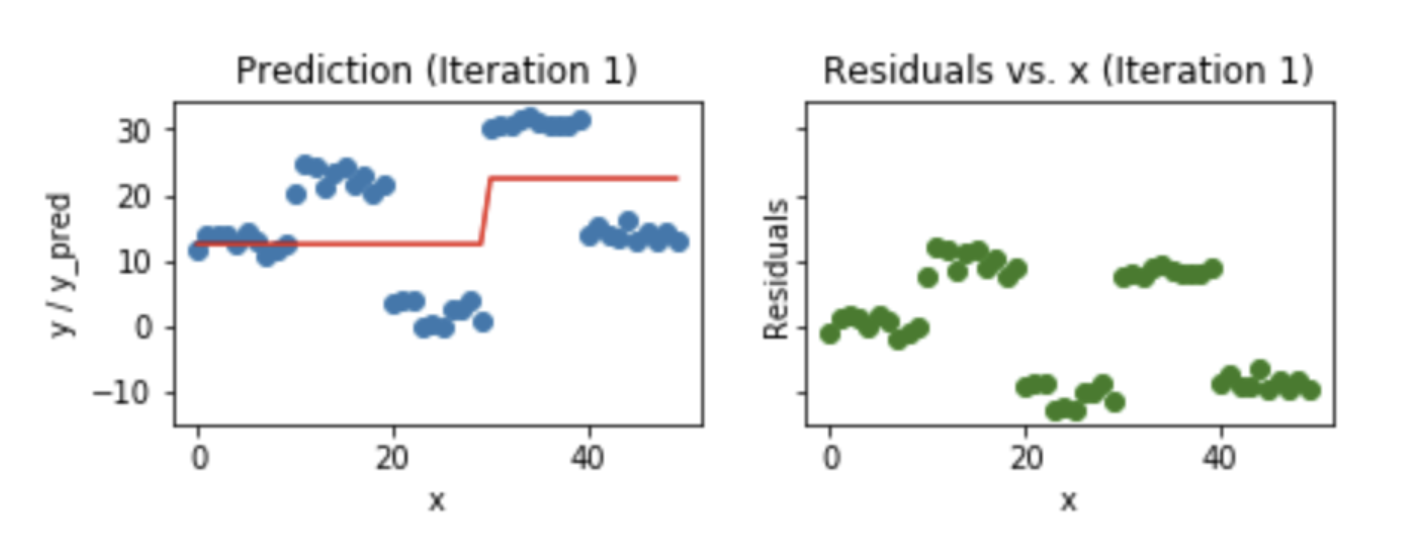

Boosting algorithms are iterative functional gradient descent algorithms. XGBoost is decision-tree-based. Its training is very fast and can be parallelized or distributed across clusters.

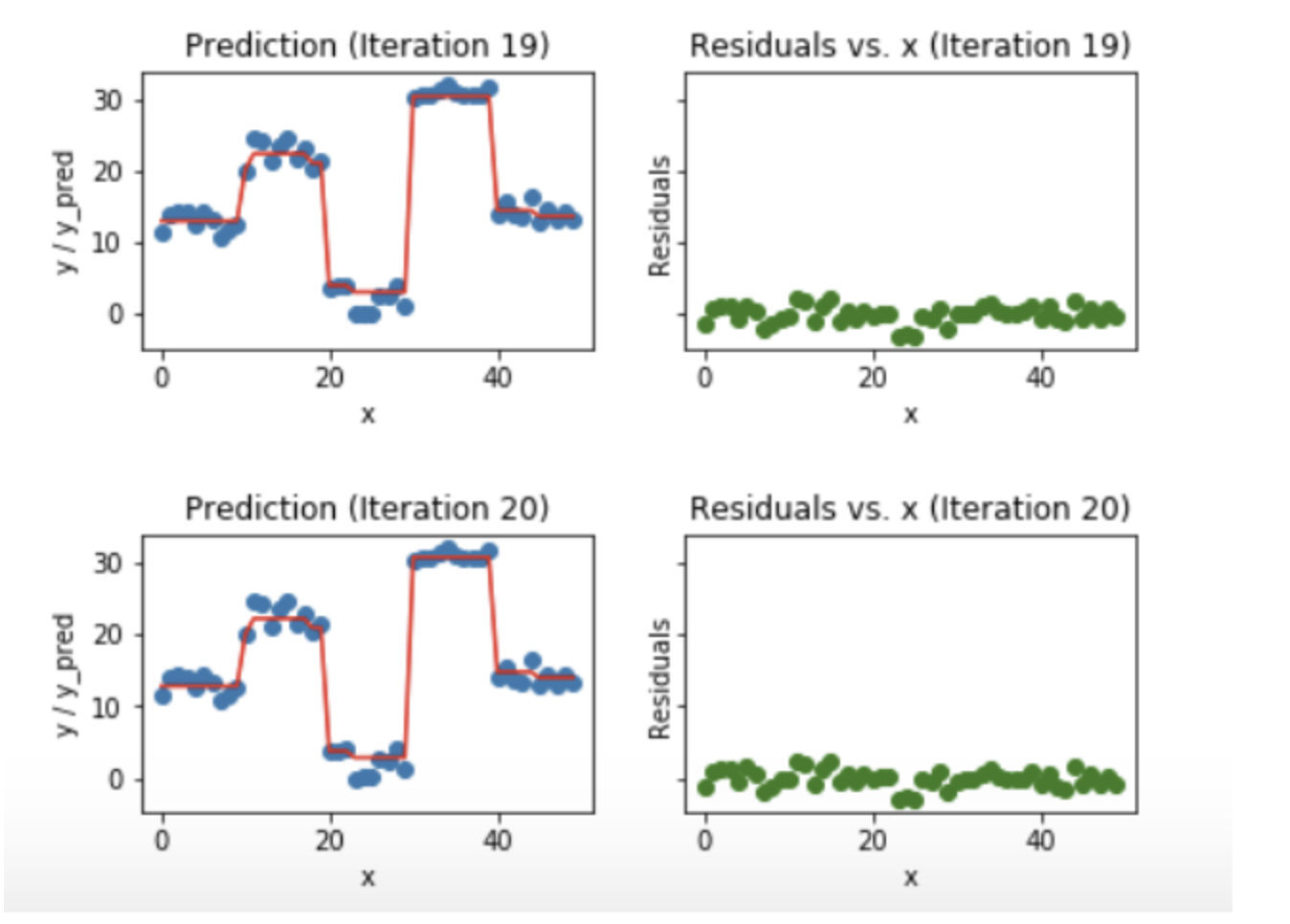

Gradient boosting is a technique for building an ensemble of weak models such that the predictions of the ensemble minimize a loss function. The R package gbm uses gradient boosting by default. XGBoost is an improved version of the gradient boosting algorithm.
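The idea of an ensemble of weak models that minimizes a loss can be sketched in a few lines. This is a minimal illustration, assuming squared-error loss, a single numeric feature, and one-split trees (stumps) as the weak models. It is not how gbm or XGBoost are implemented, and all names and data are made up for the example.

```python
def fit_stump(xs, targets):
    """Fit a one-split regression tree (stump) on one feature by least squares."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # skip the max so both sides are non-empty
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((t - left_mean) ** 2 for t in left)
               + sum((t - right_mean) ** 2 for t in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]  # (threshold, left_value, right_value)


def stump_predict(stump, x):
    threshold, left_value, right_value = stump
    return left_value if x <= threshold else right_value


def boost(xs, ys, n_rounds=10):
    """Each round fits a stump to the residuals of the ensemble so far."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        # For squared error, the negative gradient is simply the residual.
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + stump_predict(stump, x) for p, x in zip(preds, xs)]
    return base, stumps


def predict(model, x):
    base, stumps = model
    return base + sum(stump_predict(s, x) for s in stumps)


def mse(model, xs, ys):
    return sum((y - predict(model, x)) ** 2 for x, y in zip(xs, ys)) / len(ys)


xs = [1, 2, 3, 4, 5, 6, 7, 8]
ys = [1.0, 1.2, 0.9, 1.1, 3.0, 3.2, 2.9, 3.1]
baseline = (sum(ys) / len(ys), [])  # predicts the mean, no stumps
model = boost(xs, ys)
print(mse(baseline, xs, ys), mse(model, xs, ys))
```

The training error of the boosted model should come out lower than that of the constant baseline, since every round removes part of the remaining residual.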

XGBoost (eXtreme Gradient Boosting) and sklearn's GradientBoosting estimators are fundamentally the same, as they are both gradient boosting implementations. Plain gradient boosting has no explicit regularization term in its objective, whereas XGBoost adds one to control model complexity and manage the bias-variance trade-off.

Boosting with second-order derivatives is also known as Newton boosting. AdaBoost, Gradient Boosting and XGBoost are three algorithms that do not get much recognition.

They work well for a class of problems but they do... XGBoost is an implementation of gradient boosted decision trees. However, efficiency and scalability are still unsatisfactory when the data has many features.

XGBoost delivers higher performance than plain gradient boosting. It is based on gradient boosted decision trees. XGBoost (Extreme Gradient Boosting) is a gradient boosting library available in Python.

I think the difference between gradient boosting and XGBoost is that XGBoost focuses on computational performance by parallelizing tree construction. The idea behind boosting is to train predictors successively, with every subsequent model trying to fix the flaws of its predecessor.

If the regularization term is set to 0, then there is no difference between the prediction results of gradient boosted trees and XGBoost. I think the Wikipedia article on gradient boosting explains the connection to gradient descent really well.
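To make the effect of regularization concrete, here is a small sketch of a regularized leaf weight, assuming the usual second-order formulation in which a leaf's value depends on the sum of gradients G and the sum of hessians H of the samples it contains. The function and argument names are hypothetical. The L2 penalty is added to the denominator and the L1 penalty is applied by soft-thresholding G.

```python
def soft_threshold(value, alpha):
    """Shrink value toward zero by alpha, the usual way an L1 penalty acts."""
    if value > alpha:
        return value - alpha
    if value < -alpha:
        return value + alpha
    return 0.0


def leaf_weight(grad_sum, hess_sum, l2=0.0, l1=0.0):
    """Leaf value under L1/L2 penalties (hypothetical helper, not a library call)."""
    return -soft_threshold(grad_sum, l1) / (hess_sum + l2)


G, H = -3.0, 2.0
print(leaf_weight(G, H))                  # 1.5, no regularization
print(leaf_weight(G, H, l2=1.0))          # 1.0, L2 shrinks the weight
print(leaf_weight(G, H, l2=1.0, l1=1.0))  # about 0.667, L1 shrinks it further
print(leaf_weight(G, H, l1=5.0))          # zero: a large L1 penalty removes the leaf's effect
```

With both penalties at 0 the expression reduces to the plain Newton step -G/H, which is the sense in which the regularized and unregularized leaf values coincide.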

Decision-tree-based algorithms are considered best for small-to-medium structured or tabular data. At each boosting iteration, the regression tree minimizes the least squares approximation to the...

XGBoost is a very popular and in-demand algorithm, often referred to as the winning algorithm for competitions on various platforms. XGBoost stands for Extreme Gradient Boosting. Neural networks and genetic algorithms are our naive approach to imitating nature.

Gradient boosting is similar to Adaptive Boosting (AdaBoost) but differs from it in certain aspects. XGBoost models dominate many Kaggle competitions. XGBoost also adds a learning rate and column subsampling (randomly selecting a subset of features) to the gradient tree boosting algorithm, which further reduces overfitting.
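Both ideas are simple to sketch. The helpers below are hypothetical and only illustrate the mechanics: before each tree is built, a random subset of feature indices is drawn, and each tree's output is scaled by the learning rate before being added to the running prediction. In the XGBoost library these behaviours are controlled by the `colsample_bytree` and `learning_rate` (also called `eta`) parameters.

```python
import random


def sample_columns(n_features, colsample, rng):
    """Pick the feature indices one tree is allowed to split on."""
    k = max(1, int(round(colsample * n_features)))
    return sorted(rng.sample(range(n_features), k))


def shrunken_update(prediction, tree_output, learning_rate):
    """Add only a fraction of the new tree's output to the running prediction."""
    return prediction + learning_rate * tree_output


rng = random.Random(0)  # fixed seed so the sketch is repeatable
for tree_index in range(3):
    print(tree_index, sample_columns(n_features=10, colsample=0.3, rng=rng))

print(shrunken_update(prediction=1.0, tree_output=2.0, learning_rate=0.1))
```

Because each tree sees different features and contributes only a small step, no single tree or feature can dominate the ensemble, which is why both settings tend to reduce overfitting.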

Originally published by Rohith Gandhi on May 5th 2018. AdaBoost and gradient boosting are both ensemble techniques applied in machine learning to enhance the efficacy of weak learners. XGBoost stands for Extreme Gradient Boosting, where the term Gradient Boosting originates from the paper Greedy Function Approximation: A Gradient Boosting Machine, by Friedman.

In addition, Chen and Guestrin introduce shrinkage. The different types of boosting algorithms are AdaBoost, Gradient Boosting and XGBoost. Gradient Boosting is also a boosting algorithm, hence it also tries to create a strong learner from an ensemble of weak learners.

Generally, XGBoost is faster than gradient boosting, but gradient boosting has a wide range of applications. XGBoost is a boosting algorithm used in competitions such as Kaggle to improve model accuracy and robustness.

XGBoost uses advanced regularization (L1 and L2), which improves model generalization. A benefit of using ensembles of decision tree methods like gradient boosting is that they can automatically provide estimates of feature importance from a trained predictive model.
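One common way such importance estimates are derived is to add up the loss reduction (gain) of every split that uses a given feature and then normalize. The sketch below assumes a hypothetical list of split records. The feature names and gain values are made up for illustration and do not come from a real trained model.

```python
from collections import defaultdict


def gain_importance(splits):
    """Normalize the total split gain per feature so the scores sum to 1."""
    totals = defaultdict(float)
    for feature, gain in splits:
        totals[feature] += gain
    total_gain = sum(totals.values())
    return {feature: gain / total_gain for feature, gain in totals.items()}


# (feature used by the split, gain of the split), illustrative values only
splits = [("age", 4.0), ("income", 1.0), ("age", 2.0), ("city", 1.0)]
importance = gain_importance(splits)
print(importance)  # age: 0.75, income: 0.125, city: 0.125
```

Other definitions exist, for example counting how often a feature is used to split, and they can rank features differently.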

Gradient boosted trees have been around for a while, and there are a lot of materials on the topic. This tutorial will explain boosted trees in a self-contained way.

Although other open-source implementations of the approach existed before XGBoost, the release of XGBoost appeared to unleash the power of the technique and made the applied machine learning...

Gradient Boosting Decision Tree (GBDT) is a popular machine learning algorithm. In this algorithm, decision trees are created sequentially.

GBDT has several effective implementations, such as XGBoost, which adopt many optimization techniques. The base algorithm of XGBoost is the Gradient Boosting Decision Tree algorithm.

Difference between GBM (Gradient Boosting Machine) and XGBoost (Extreme Gradient Boosting), posted by Naresh Kumar. AdaBoost (Adaptive Boosting): AdaBoost works on improving the... Weights play an important role in...
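The role weights play in AdaBoost can be sketched with a single round of the classic binary algorithm. This is a minimal illustration, assuming labels in {-1, +1} and a weak learner whose weighted error is strictly between 0 and 1. The function name and the toy data are hypothetical.

```python
import math


def adaboost_round(weights, y_true, y_pred):
    """One AdaBoost round: score the weak learner, then reweight the samples."""
    # Weighted error of the weak learner (must be strictly between 0 and 1).
    err = sum(w for w, y, p in zip(weights, y_true, y_pred) if y != p) / sum(weights)
    # Say of this learner in the final vote: lower error gives a larger alpha.
    alpha = 0.5 * math.log((1.0 - err) / err)
    # Misclassified samples (y * p == -1) gain weight, correct ones lose it.
    updated = [w * math.exp(-alpha * y * p) for w, y, p in zip(weights, y_true, y_pred)]
    total = sum(updated)
    return alpha, [w / total for w in updated]


y_true = [1, 1, -1, -1, 1]
y_pred = [1, 1, -1, -1, -1]  # the last sample is misclassified
weights = [0.2] * 5          # start from uniform weights

alpha, new_weights = adaboost_round(weights, y_true, y_pred)
print(alpha)        # about 0.693
print(new_weights)  # about [0.125, 0.125, 0.125, 0.125, 0.5]
```

After the update the single misclassified sample carries half of the total weight, so the next weak learner is pushed to get it right.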

Extreme Gradient Boosting XGBoost is an open-source library that provides an efficient and effective implementation of the gradient boosting algorithm.

